What Is Artificial Intelligence (AI)?

Understanding The Recognition Pattern Of AI

With ML-powered image recognition, photos and captured video can more easily and efficiently be organized into categories that can lead to better accessibility, improved search and discovery, seamless content sharing, and more. Broadly speaking, visual search is the process of using real-world images to produce more reliable, accurate online searches. Visual search allows retailers to suggest items that thematically, stylistically, or otherwise relate to a given shopper’s behaviors and interests. Often referred to as “image classification” or “image labeling”, this core task is a foundational component in solving many computer vision-based machine learning problems. In retail, photo recognition tools have transformed how customers interact with products. Shoppers can upload a picture of a desired item, and the software will identify similar products available in the store.

These neural networks are programmatic structures modeled after the decision-making processes of the human brain. They consist of layers of interconnected nodes that extract features from the data and make predictions about what the data represents. The accuracy of image recognition depends on the quality of the algorithm and the data it was trained on. Advanced image recognition systems, especially those using deep learning, have achieved accuracy rates comparable to or even surpassing human levels in specific tasks. The performance can vary based on factors like image quality, algorithm sophistication, and training dataset comprehensiveness. Deep learning image recognition represents the pinnacle of image recognition technology.

A CNN, for instance, performs image analysis by processing an image pixel by pixel, learning to identify various features and objects present in an image. Deep learning is particularly effective at tasks like image and speech recognition and natural language processing, making it a crucial component in the development and advancement of AI systems. This AI technology enables computers and systems to derive meaningful information from digital images, videos and other visual inputs, and based on those inputs, it can take action.
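To make the pixel-by-pixel processing concrete, here is a minimal sketch of the sliding-window operation that a convolutional layer applies. The function name `conv2d`, the toy image, and the hand-picked edge kernel are illustrative assumptions; a real CNN learns its kernel values during training and uses an optimized library rather than Python loops.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the pixels currently under the kernel window.
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        feature_map.append(row)
    return feature_map


# Toy 3x4 grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-picked kernel that responds to dark-to-bright vertical edges.
vertical_edge = [[-1, 1], [-1, 1]]

feature_map = conv2d(image, vertical_edge)
print(feature_map)
```

The feature map is non-zero only where the window straddles the dark-to-bright boundary, which is the sense in which a kernel "detects" a feature.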

What are the types of image recognition?

AI is a concept that has been around formally since the 1950s when it was defined as a machine’s ability to perform a task that would’ve previously required human intelligence. This is quite a broad definition that has been modified over decades of research and technological advancements. AI has a range of applications with the potential to transform how we work and our daily lives. While many of these transformations are exciting, like self-driving cars, virtual assistants, or wearable devices in the healthcare industry, they also pose many challenges.

In general, traditional computer vision and pixel-based image recognition systems are very limited when it comes to scalability or the ability to re-use them in varying scenarios/locations. The real world also presents an array of challenges, including diverse lighting conditions, image qualities, and environmental factors that can significantly impact the performance of AI image recognition systems. While these systems may excel in controlled laboratory settings, their robustness in uncontrolled environments remains a challenge.

This dataset should be diverse and extensive, especially if the target image to see and recognize covers a broad range. Image recognition machine learning models thrive on rich data, which includes a variety of images or videos. When it comes to the use of image recognition, especially in the realm of medical image analysis, the role of CNNs is paramount. These networks, through supervised learning, have been trained on extensive image datasets. This training enables them to accurately detect and diagnose conditions from medical images, such as X-rays or MRI scans.

Object detection is generally more complex as it involves both identification and localization of objects. The ethical implications of facial recognition technology are also a significant area of discussion. When it comes to image recognition, particularly facial recognition, there’s a delicate balance between privacy concerns and the benefits of this technology. The future of facial recognition, therefore, hinges not just on technological advancements but also on developing robust guidelines to govern its use.

Alan Turing’s 1950 paper “Computing Machinery and Intelligence” set the stage for AI research and development, and was the first proposal of the Turing test, a method used to assess machine intelligence. The term “artificial intelligence” was coined in 1956 by computer scientist John McCarthy at an academic conference at Dartmouth College. Generative AI tools, sometimes referred to as AI chatbots (including ChatGPT, Gemini, Claude and Grok), use artificial intelligence to produce written content in a range of formats, from essays to code and answers to simple questions.

What is the Difference Between Image Recognition and Object Detection?

Examples include Netflix’s recommendation engine and IBM’s Deep Blue (used to play chess). The weather models broadcasters rely on to make accurate forecasts consist of complex algorithms run on supercomputers. Machine-learning techniques enhance these models by making them more applicable and precise.

Repetitive tasks such as data entry and factory work, as well as customer service conversations, can all be automated using AI technology. Artificial intelligence allows machines to match, or even improve upon, the capabilities of the human mind. From the development of self-driving cars to the proliferation of generative AI tools, AI is increasingly becoming part of everyday life.

These learning algorithms are adept at recognizing complex patterns within an image, making them crucial for tasks like facial recognition, object detection within an image, and medical image analysis. Computer vision is another prevalent application of machine learning techniques, where machines process raw images, videos and visual media, and extract useful insights from them. Deep learning and convolutional neural networks are used to break down images into pixels and tag them accordingly, which helps computers discern the difference between visual shapes and patterns. Computer vision is used for image recognition, image classification and object detection, and completes tasks like facial recognition and detection in self-driving cars and robots.

While speech technology had a limited vocabulary in the early days, it is utilized in a wide number of industries today, such as automotive, technology, and healthcare. Its adoption has only continued to accelerate in recent years due to advancements in deep learning and big data. Research shows that this market is expected to be worth USD 24.9 billion by 2025.

We might see more sophisticated applications in areas like environmental monitoring, where image recognition can be used to track changes in ecosystems or to monitor wildlife populations. Additionally, as machine learning continues to evolve, the possibilities of what image recognition could achieve are boundless. We’re at a point where the question no longer is “if” image recognition can be applied to a particular problem, but “how” it will revolutionize the solution.

As the layers are interconnected, each layer depends on the results of the previous layer. Therefore, a huge dataset is essential to train a neural network so that the deep learning system learns to imitate the human reasoning process and continues to learn. For the object detection technique to work, the model must first be trained on various image datasets using deep learning methods. With image recognition, a machine can identify objects in a scene just as easily as a human can, and often faster and at a more granular level. And once a model has learned to recognize particular elements, it can be programmed to perform a particular action in response, making it an integral part of many tech sectors.

What are the Common Applications of Image Recognition?

They’re frequently trained using guided machine learning on millions of labeled images. As with many tasks that rely on human intuition and experimentation, however, someone eventually asked if a machine could do it better. Neural architecture search (NAS) uses optimization techniques to automate the process of neural network design. Given a goal (e.g., model accuracy) and constraints (network size or runtime), these methods rearrange composable blocks of layers to form new architectures never before tested. Though NAS has found new architectures that beat out their human-designed peers, the process is incredibly computationally expensive, as each new variant needs to be trained.

Each is fed databases to learn what it should put out when presented with certain data during training. Tesla’s autopilot feature in its electric vehicles is probably what most people think of when considering self-driving cars. Still, Waymo, from Google’s parent company, Alphabet, makes autonomous rides, like a taxi without a taxi driver, in San Francisco, CA, and Phoenix, AZ. In DeepLearning.AI’s AI For Good Specialization, meanwhile, you’ll build skills combining human and machine intelligence for positive real-world impact using AI in a beginner-friendly, three-course program.

Image recognition, photo recognition, and picture recognition are terms that are used interchangeably. Whether you’re a developer, a researcher, or an enthusiast, you now have the opportunity to harness this incredible technology and shape the future. With Cloudinary as your assistant, you can expand the boundaries of what is achievable in your applications and websites. You can streamline your workflow process and deliver visually appealing, optimized images to your audience. Suppose you wanted to train a machine-learning model to recognize and differentiate images of circles and squares.

It will most likely say it’s 77% dog, 21% cat, and 2% donut, which is something referred to as confidence score. It’s there when you unlock a phone with your face or when you look for the photos of your pet in Google Photos. It can be big in life-saving applications like self-driving cars and diagnostic healthcare. But it also can be small and funny, like in that notorious photo recognition app that lets you identify wines by taking a picture of the label.
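Confidence scores like these are commonly produced by passing a model's raw output scores through a softmax function, which turns them into values that sum to 1. The sketch below assumes that approach; the logit values are made up for illustration and were chosen so the result lands near the 77% / 21% / 2% split mentioned above.

```python
import math


def softmax(logits):
    """Convert raw model scores into confidence scores that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


labels = ["dog", "cat", "donut"]
logits = [3.0, 1.7, -0.65]  # hypothetical raw outputs of a classifier

scores = softmax(logits)
best_score, best_label = max(zip(scores, labels))

for label, score in zip(labels, scores):
    print(f"{label}: {score:.0%}")
print("prediction:", best_label)
```

The predicted class is simply the label with the highest confidence score; the remaining scores indicate how much weight the model gave to the alternatives.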

This can involve using custom algorithms or modifications to existing algorithms to improve their performance on images (e.g., model retraining). One of the foremost concerns in AI image recognition is the delicate balance between innovation and safeguarding individuals’ privacy. As these systems become increasingly adept at analyzing visual data, there’s a growing need to ensure that the rights and privacy of individuals are respected.

AI works to advance healthcare by accelerating medical diagnoses, drug discovery and development and medical robot implementation throughout hospitals and care centers. AI is changing the game for cybersecurity, analyzing massive quantities of risk data to speed response times and augment under-resourced security operations. Google Photos already employs this functionality, helping users organize photos by places, objects within those photos, people, and more, all without requiring any manual tagging.

Machine learning and deep learning are sub-disciplines of AI, and deep learning is a sub-discipline of machine learning. To see just how small you can make these networks with good results, check out this post on creating a tiny image recognition model for mobile devices. You can tell that it is, in fact, a dog; but an image recognition algorithm works differently.

With the help of rear-facing cameras, sensors, and LiDAR, images generated are compared with the dataset using the image recognition software. It helps accurately detect other vehicles, traffic lights, lanes, pedestrians, and more. The image recognition technology helps you spot objects of interest in a selected portion of an image. Visual search works first by identifying objects in an image and comparing them with images on the web. Unlike ML, where the input data is analyzed using algorithms, deep learning uses a layered neural network. The information input is received by the input layer, processed by the hidden layer, and results generated by the output layer.

To work, a generative AI model is fed massive data sets and trained to identify patterns within them, then subsequently generates outputs that resemble this training data. Early examples of models, including GPT-3, BERT, or DALL-E 2, have shown what’s possible. In the future, models will be trained on a broad set of unlabeled data that can be used for different tasks, with minimal fine-tuning. Systems that execute specific tasks in a single domain are giving way to broad AI systems that learn more generally and work across domains and problems. Foundation models, trained on large, unlabeled datasets and fine-tuned for an array of applications, are driving this shift.

It then combines the feature maps obtained from processing the image at the different aspect ratios to naturally handle objects of varying sizes. While AI-powered image recognition offers a multitude of advantages, it is not without its share of challenges. In recent years, the field of AI has made remarkable strides, with image recognition emerging as a testament to its potential. While it has been around for a number of years prior, recent advancements have made image recognition more accurate and accessible to a broader audience.

This is particularly evident in applications like image recognition and object detection in security. The objects in the image are identified, ensuring the efficiency of these applications. Image recognition, an integral component of computer vision, represents a fascinating facet of AI. It involves the use of algorithms to allow machines to interpret and understand visual data from the digital world.

- Most image recognition models are benchmarked using common accuracy metrics on common datasets.

- In this article, you’ll learn more about artificial intelligence, what it actually does, and different types of it.

- Human beings have the innate ability to distinguish and precisely identify objects, people, animals, and places from photographs.

(2008) Google makes breakthroughs in speech recognition and introduces the feature in its iPhone app. (1985) Companies are spending more than a billion dollars a year on expert systems and an entire industry known as the Lisp machine market springs up to support them. Companies like Symbolics and Lisp Machines Inc. build specialized computers to run on the AI programming language Lisp. (1964) Daniel Bobrow develops STUDENT, an early natural language processing program designed to solve algebra word problems, as a doctoral candidate at MIT.

The neural network learned to recognize a cat without being told what a cat is, ushering in the breakthrough era for neural networks and deep learning funding. The primary approach to building AI systems is through machine learning (ML), where computers learn from large datasets by identifying patterns and relationships within the data. A machine learning algorithm uses statistical techniques to help it “learn” how to get progressively better at a task, without necessarily having been programmed for that certain task.

Image recognition is used to perform many machine-based visual tasks, such as labeling the content of images with meta tags, performing image content search and guiding autonomous robots, self-driving cars and accident-avoidance systems. Typically, image recognition entails building deep neural networks that analyze each image pixel. These networks are fed as many labeled images as possible to train them to recognize related images. Given the simplicity of the task, it’s common for new neural network architectures to be tested on image recognition problems and then applied to other areas, like object detection or image segmentation. This section will cover a few major neural network architectures developed over the years. Face recognition technology, a specialized form of image recognition, is becoming increasingly prevalent in various sectors.

Despite being 50 to 500X smaller than AlexNet (depending on the level of compression), SqueezeNet achieves similar levels of accuracy as AlexNet. This feat is possible thanks to a combination of residual-like layer blocks and careful attention to the size and shape of convolutions. SqueezeNet is a great choice for anyone training a model with limited compute resources or for deployment on embedded or edge devices. ResNets, short for residual networks, solved this problem with a clever bit of architecture. Blocks of layers are split into two paths, with one undergoing more operations than the other, before both are merged back together.
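The two-path structure of a residual block can be sketched in a few lines. This is a simplified illustration, assuming small fully connected layers on plain Python lists instead of the convolutions real ResNets use; the helper names (`linear`, `relu`, `residual_block`) and the weights are hypothetical.

```python
def relu(vector):
    """Element-wise ReLU activation."""
    return [max(0.0, x) for x in vector]


def linear(vector, weights):
    """Multiply a vector by a weight matrix (a list of rows)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


def residual_block(x, w1, w2):
    # Longer path: two weight layers with an activation in between.
    transformed = linear(relu(linear(x, w1)), w2)
    # Shorter path: the input itself, carried over unchanged (the "skip").
    # Merge: add both paths together, then apply the activation.
    return relu([t + s for t, s in zip(transformed, x)])


x = [1.0, 2.0]
zero_weights = [[0.0, 0.0], [0.0, 0.0]]

# With all-zero weights the longer path contributes nothing,
# so the block passes its (non-negative) input straight through.
print(residual_block(x, zero_weights, zero_weights))
```

Because the skip path always carries the input forward, a block can fall back to behaving like the identity, which is one common explanation for why very deep residual networks remain trainable.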

In fact, in just a few years we might come to take the recognition pattern of AI for granted and not even consider it to be AI. Most image recognition models are benchmarked using common accuracy metrics on common datasets. Top-1 accuracy refers to the fraction of images for which the model output class with the highest confidence score is equal to the true label of the image. Top-5 accuracy refers to the fraction of images for which the true label falls in the set of model outputs with the top 5 highest confidence scores.
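The two metrics just defined can be computed with one small function. This is a minimal sketch; the function name `top_k_accuracy` and the toy scores are assumptions made for illustration (three classes, so top-2 stands in for top-5).

```python
def top_k_accuracy(score_rows, true_labels, k):
    """Fraction of examples whose true label is among the k highest-scoring classes."""
    hits = 0
    for scores, truth in zip(score_rows, true_labels):
        # Class indices ordered from highest to lowest confidence score.
        ranked = sorted(range(len(scores)), key=lambda c: scores[c], reverse=True)
        if truth in ranked[:k]:
            hits += 1
    return hits / len(true_labels)


# Hypothetical confidence scores for four images over three classes.
score_rows = [
    [0.70, 0.20, 0.10],  # true class 0: ranked first
    [0.35, 0.45, 0.20],  # true class 0: ranked second
    [0.10, 0.30, 0.60],  # true class 0: ranked last
    [0.20, 0.10, 0.70],  # true class 2: ranked first
]
true_labels = [0, 0, 0, 2]

top1 = top_k_accuracy(score_rows, true_labels, k=1)
top2 = top_k_accuracy(score_rows, true_labels, k=2)
print(top1, top2)
```

Top-k accuracy can never be lower than top-1 accuracy on the same predictions, since every top-1 hit is also a top-k hit.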

The image recognition system also helps detect text from images and convert it into a machine-readable format using optical character recognition. According to Fortune Business Insights, the market size of global image recognition technology was valued at $23.8 billion in 2019. This figure is expected to skyrocket to $86.3 billion by 2027, growing at a 17.6% CAGR during the said period.

The customizability of image recognition allows it to be used in conjunction with multiple software programs. For example, after an image recognition program is specialized to detect people in a video frame, it can be used for people counting, a popular computer vision application in retail stores. Over time, AI systems improve on their performance of specific tasks, allowing them to adapt to new inputs and make decisions without being explicitly programmed to do so. In essence, artificial intelligence is about teaching machines to think and learn like humans, with the goal of automating work and solving problems more efficiently. Artificial intelligence (AI) is a wide-ranging branch of computer science that aims to build machines capable of performing tasks that typically require human intelligence. While AI is an interdisciplinary science with multiple approaches, advancements in machine learning and deep learning, in particular, are creating a paradigm shift in virtually every industry.

Previously humans would have to laboriously catalog each individual image according to all its attributes, tags, and categories. This is a great place for AI to step in and be able to do the task much faster and much more efficiently than a human worker who is going to get tired out or bored. Not to mention these systems can avoid human error and allow for workers to be doing things of more value. In terms of development, facial recognition is an application where image recognition uses deep learning models to improve accuracy and efficiency.

Still, some examples of the power of narrow AI include voice assistants, image-recognition systems, technologies that respond to simple customer service requests, and tools that flag inappropriate content online. Weak AI, meanwhile, refers to the narrow use of widely available AI technology, like machine learning or deep learning, to perform very specific tasks, such as playing chess, recommending songs, or steering cars. Also known as Artificial Narrow Intelligence (ANI), weak AI is essentially the kind of AI we use daily. Artificial intelligence aims to provide machines with similar processing and analysis capabilities as humans, making AI a useful counterpart to people in everyday life.

(2018) Google releases natural language processing engine BERT, reducing barriers in translation and understanding by ML applications. This became the catalyst for the AI boom, and the basis on which image recognition grew. (1966) MIT professor Joseph Weizenbaum creates Eliza, one of the first chatbots to successfully mimic the conversational patterns of users, creating the illusion that it understood more than it did. This introduced the Eliza effect, a common phenomenon where people falsely attribute humanlike thought processes and emotions to AI systems.

Deep learning image recognition software allows tumor monitoring across time, for example, to detect abnormalities in breast cancer scans. If you don’t want to start from scratch and use pre-configured infrastructure, you might want to check out our computer vision platform Viso Suite. The enterprise suite provides the popular open-source image recognition software out of the box, with over 60 of the best pre-trained models. It also provides data collection, image labeling, and deployment to edge devices – everything out-of-the-box and with no-code capabilities. When it comes to image recognition, Python is the programming language of choice for most data scientists and computer vision engineers.

The replacement of a considerable chunk of modern labor by artificially intelligent systems is a credible near-future possibility. Microsoft uses GPT-4 in Copilot, its AI chatbot formerly known as Bing chat, and in a more advanced version of Dall-E 3 to generate images through Microsoft Designer. Google had a rough start in the AI chatbot race with an underperforming tool called Google Bard, originally powered by LaMDA. The company then switched the LLM behind Bard twice: the first time for PaLM 2, and then for Gemini, the LLM currently powering it. GPT stands for Generative Pre-trained Transformer, and GPT-3 was the largest language model at its 2020 launch, with 175 billion parameters.…

Tips for Overcoming Natural Language Processing Challenges

Unlocking the potential of natural language processing: Opportunities and challenges

Natural language is ambiguous, since the same words and phrases can have different meanings in different contexts. Machine learning requires a lot of data to function to its outer limits: billions of pieces of training data. That said, data (and human language!) is only growing by the day, as are new machine learning techniques and custom algorithms. All of the problems above will require more research and new techniques in order to improve on them. Natural language processing (NLP) is the ability of a computer to analyze and understand human language.

The field of NLP draws on different theories and techniques that deal with the problem of communicating with computers in natural language. Some of these tasks have direct real-world applications, such as machine translation, named entity recognition, and optical character recognition. Though NLP tasks are closely interwoven, they are frequently treated separately for convenience. Some tasks, such as automatic summarization and co-reference analysis, act as subtasks that are used in solving larger tasks. NLP is much discussed nowadays because of its various applications and recent developments, although in the late 1940s the term did not even exist. So it is interesting to look at the history of NLP, the progress made so far, and some of the ongoing projects that make use of NLP.

For a hidden Markov model, decoding means finding the state-switch sequence most likely to have generated a particular output-symbol sequence. Training means estimating, from output-symbol chain data, the state-switch and output probabilities that fit this data best. The objective of this section is to present the various datasets used in NLP and some state-of-the-art models in NLP. There is a system called MITA (Metlife’s Intelligent Text Analyzer) (Glasgow et al. (1998) [48]) that extracts information from life insurance applications. Ahonen et al. (1998) [1] suggested a mainstream framework for text mining that uses pragmatic and discourse level analyses of text.

Only the introduction of hidden Markov models, applied to part-of-speech tagging, announced the end of the old rule-based approach. This is particularly important for analysing sentiment, where accurate analysis enables service agents to prioritise which dissatisfied customers to help first or which customers to extend promotional offers to. Managing and delivering mission-critical customer knowledge is also essential for successful Customer Service. Sentiment analysis is another way companies could use NLP in their operations.

The goal is to create an NLP system that can identify its limitations and clear up confusion by using questions or hints. A hidden Markov model (HMM) is a system that shifts between several states, generating a feasible output symbol with each switch. The sets of viable states and unique symbols may be large, but they are finite and known. One problem such a model can solve is inference: given a certain sequence of output symbols, compute the probabilities of one or more candidate state sequences.
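One standard way to find the most probable state sequence for a given sequence of output symbols is the Viterbi algorithm. The sketch below applies it to a toy part-of-speech tagging problem; the two tags, the two words, and every probability are invented for illustration and are not taken from any real tagger.

```python
def viterbi(observations, states, start_p, trans_p, emit_p):
    """Return the most probable state sequence for `observations` and its probability."""
    # best[t][s] = (probability of the best path ending in state s at step t, previous state)
    best = [{s: (start_p[s] * emit_p[s][observations[0]], None) for s in states}]
    for obs in observations[1:]:
        layer = {}
        for s in states:
            # Pick the predecessor that maximizes the path probability into s.
            prob, prev = max((best[-1][p][0] * trans_p[p][s], p) for p in states)
            layer[s] = (prob * emit_p[s][obs], prev)
        best.append(layer)

    # Backtrack from the most probable final state.
    last = max(states, key=lambda s: best[-1][s][0])
    probability = best[-1][last][0]
    path = [last]
    for layer in reversed(best[1:]):
        path.append(layer[path[-1]][1])
    path.reverse()
    return path, probability


states = ["NOUN", "VERB"]  # hidden states (hypothetical tag set)
start_p = {"NOUN": 0.8, "VERB": 0.2}
trans_p = {
    "NOUN": {"NOUN": 0.3, "VERB": 0.7},
    "VERB": {"NOUN": 0.6, "VERB": 0.4},
}
emit_p = {
    "NOUN": {"dogs": 0.9, "bark": 0.1},
    "VERB": {"dogs": 0.1, "bark": 0.9},
}

path, probability = viterbi(["dogs", "bark"], states, start_p, trans_p, emit_p)
print(path, probability)
```

Viterbi keeps only the best path into each state at every step, so its cost grows linearly with the sequence length instead of exponentially with the number of candidate state sequences.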

- The National Library of Medicine is developing The Specialist System [78,79,80, 82, 84].

- Pragmatics concerns how language can be used in context to achieve communication goals.

- The extracted information can be applied for a variety of purposes, for example to prepare a summary, to build databases, identify keywords, classifying text items according to some pre-defined categories etc.

We first give insights on some of the mentioned tools and relevant work done before moving to the broad applications of NLP. NLP can be classified into two parts, Natural Language Understanding (NLU) and Natural Language Generation (NLG), which cover the tasks of understanding and generating text, respectively. The objective of this section is to discuss Natural Language Understanding (NLU) and Natural Language Generation (NLG).

Generative methods create rich models of probability distributions, which is why they can generate synthetic data. Discriminative methods are more functional: they estimate posterior probabilities directly and are based on observations. Srihari [129] explains that a generative model, when used to identify an unknown speaker’s language, works by resemblance and would require deep knowledge of numerous languages to perform the match. Discriminative methods rely on a less knowledge-intensive approach, using the distinctions between languages.

They do this by looking at the context of your sentence instead of just the words themselves. Keeping these metrics in mind helps in evaluating the performance of an NLP model for a particular task or a variety of tasks. The objective of this section is to discuss the evaluation metrics used to assess a model’s performance and the challenges involved.

But once it learns the semantic relations and inferences of the question, it will be able to automatically perform the filtering and formulation necessary to provide an intelligible answer, rather than simply showing you data. The extracted information can be applied for a variety of purposes, for example to prepare a summary, build databases, identify keywords, or classify text items according to some pre-defined categories. For example, CONSTRUE, developed for Reuters, is used in classifying news stories (Hayes, 1992) [54]. It has been suggested that although many IE systems can successfully extract terms from documents, acquiring relations between the terms is still a difficulty. PROMETHEE is a system that extracts lexico-syntactic patterns relative to a specific conceptual relation (Morin, 1999) [89].

With its ability to understand human behavior and act accordingly, AI has already become an integral part of our daily lives. The use of AI has evolved, with the latest wave being natural language processing (NLP). In the recent past, models dealing with Visual Commonsense Reasoning [31] and NLP have also been getting the attention of several researchers, and this seems a promising and challenging area to work on. Merity et al. [86] extended conventional word-level language models based on Quasi-Recurrent Neural Network and LSTM to handle the granularity at character and word level.

Understanding these challenges helps you not only explore advanced NLP but also leverage its capabilities to revolutionize how we interact with machines, in everything from customer service automation to complicated data analysis. Essentially, NLP systems attempt to analyze, and in many cases, “understand” human language. It can identify that a customer is making a request for a weather forecast, but the location (i.e. entity) is misspelled in this example.

The use of NLP has become more prevalent in recent years as technology has advanced. Personal Digital Assistant applications such as Google Home, Siri, Cortana, and Alexa have all been updated with NLP capabilities. Speech recognition is an excellent example of how NLP can be used to improve the customer experience. It is a very common requirement for businesses to have IVR systems in place so that customers can interact with their products and services without having to speak to a live person.

When a customer asks for several things at the same time, such as different products, boost.ai’s conversational AI can easily distinguish between the multiple variables. We believe in solving complex business challenges of the converging world, by using cutting-edge technologies. Establish feedback mechanisms to gather insights from users of the NLP system. Use this feedback to make adaptive changes, ensuring the solution remains effective and aligned with business goals. Standardize data formats and structures to facilitate easier integration and processing. Here’s a look at how to effectively implement NLP solutions, overcome data integration challenges, and measure the success and ROI of such initiatives.

SaaS text analysis platforms, like MonkeyLearn, allow users to train their own machine learning NLP models, often in just a few steps, which can greatly ease many of the NLP processing limitations above. The first objective gives insights of the various important terminologies of NLP and NLG, and can be useful for the readers interested to start their early career in NLP and work relevant to its applications. The second objective of this paper focuses on the history, applications, and recent developments in the field of NLP. The third objective is to discuss datasets, approaches and evaluation metrics used in NLP.

Six challenges in NLP and NLU – and how boost.ai solves them

In case of syntactic level ambiguity, one sentence can be parsed into multiple syntactical forms. Lexical level ambiguity refers to ambiguity of a single word that can have multiple assertions. Each of these levels can produce ambiguities that can be solved by the knowledge of the complete sentence.

How much can it actually understand what a difficult user says, and what can be done to keep the conversation going? These are some of the questions every company should ask before deciding on how to automate customer interactions. The sixth and final step to overcome NLP challenges is to be ethical and responsible in your NLP projects and applications. NLP can have a huge impact on society and individuals, both positively and negatively.

The third step to overcome NLP challenges is to experiment with different models and algorithms for your project. There are many types of NLP models, such as rule-based, statistical, neural, and hybrid models, that have different strengths and weaknesses. For example, rule-based models are good for simple and structured tasks, but they require a lot of manual effort and domain knowledge.

Challenges and Considerations in Natural Language Processing

This could be useful for content moderation and content translation companies. This use case involves extracting information from unstructured data, such as text and images. NLP can be used to identify the most relevant parts of those documents and present them in an organized manner. Seunghak et al. [158] designed a Memory-Augmented-Machine-Comprehension-Network (MAMCN) to handle dependencies faced in reading comprehension. The model achieved state-of-the-art performance on document-level using TriviaQA and QUASAR-T datasets, and paragraph-level using SQuAD datasets. First, it understands that “boat” is something the customer wants to know more about, but it’s too vague.

But later, some MT production systems were providing output to their customers (Hutchins, 1986) [60]. By this time, work on the use of computers for literary and linguistic studies had also started. As early as 1960, signature work influenced by AI began, with the BASEBALL Q-A systems (Green et al., 1961) [51]. LUNAR (Woods,1978) [152] and Winograd SHRDLU were natural successors of these systems, but they were seen as stepped-up sophistication, in terms of their linguistic and their task processing capabilities.

Tools such as ChatGPT and Google Bard, which are trained on large corpora of text data, use natural language processing techniques to answer user queries. Ties with cognitive linguistics are part of the historical heritage of NLP, but they have been less frequently addressed since the statistical turn during the 1990s. Ambiguity is one of the major problems of natural language; it occurs when one sentence can lead to different interpretations.

Conversational AI / Chatbot

There is rich semantic content in human language that allows speakers to convey a wide range of meaning through words and sentences. Natural language is also pragmatic, meaning that language is used in context to achieve communication goals. Human language evolves over time through processes such as lexical change. Natural language processing techniques are used in machine translation, healthcare, finance, customer service, sentiment analysis, and extracting valuable information from text data. Many companies use natural language processing techniques to solve their text-related problems.

BERT provides a contextual embedding for each word present in the text, unlike context-free models (word2vec and GloVe). Muller et al. [90] used the BERT model to analyze tweets on COVID-19 content. The use of the BERT model in the legal domain was explored by Chalkidis et al. [20]. A language can be defined as a set of rules or set of symbols where symbols are combined and used for conveying or broadcasting information. Since not all users are well-versed in machine-specific languages, Natural Language Processing (NLP) caters to those users who do not have enough time to learn new languages or to perfect them.

Conversational AI can extrapolate which of the important words in any given sentence are most relevant to a user’s query and deliver the desired outcome with minimal confusion. In the first sentence, the ‘How’ is important, and the conversational AI understands that, letting the digital advisor respond correctly. In the second example, ‘How’ has little to no value and it understands that the user’s need to make changes to their account is the essence of the question.

Sometimes it’s hard even for another human being to parse out what someone means when they say something ambiguous. There may not be a clear concise meaning to be found in a strict analysis of their words. In order to resolve this, an NLP system must be able to seek context to help it understand the phrasing. To be sufficiently trained, an AI must typically review millions of data points. Processing all those data can take lifetimes if you’re using an insufficiently powered PC. However, with a distributed deep learning model and multiple GPUs working in coordination, you can trim down that training time to just a few hours.

The Pilot earpiece is connected via Bluetooth to the Pilot speech translation app, which uses speech recognition, machine translation, machine learning and speech synthesis technology. Simultaneously, the user will hear the translated version of the speech on the second earpiece. Moreover, the conversation need not take place between only two people; other users can join in and discuss as a group. As of now, the user may experience a lag of a few seconds between the speech and its translation, which Waverly Labs is working to reduce. The Pilot earpiece will be available from September but can be pre-ordered now for $249.

By using spell correction on the sentence, and approaching entity extraction with machine learning, it’s still able to understand the request and provide correct service. The fourth step to overcome NLP challenges is to evaluate your results and measure your performance. There are many metrics and methods to evaluate NLP models and applications, such as accuracy, precision, recall, F1-score, BLEU, ROUGE, perplexity, and more. However, these metrics may not always reflect the real-world quality and usefulness of your NLP outputs. Therefore, you should also consider using human evaluation, user feedback, error analysis, and ablation studies to assess your results and identify the areas of improvement.
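As a rough illustration of the first metrics listed above, precision, recall, and F1 for a binary classifier can be computed directly from the gold labels and the predictions. This is a minimal sketch; the function name `precision_recall_f1` and the label encoding (1 for the positive class) are assumptions made for the example, not part of any specific toolkit.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall and F1 for one class (hypothetical helper)."""
    pairs = list(zip(y_true, y_pred))
    # True positives: predicted positive and actually positive.
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    # False positives: predicted positive but actually something else.
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    # False negatives: actually positive but predicted something else.
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


if __name__ == "__main__":
    gold = [1, 1, 1, 0, 0]
    predicted = [1, 1, 0, 1, 0]
    print(precision_recall_f1(gold, predicted))
```

Numbers like these are a starting point only; as noted above, they may not reflect real-world usefulness, so they are best read alongside human evaluation and error analysis.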

More relevant reading

Our dedicated development team has strong experience in designing, managing, and offering outstanding NLP services. Different languages have not only vastly different sets of vocabulary, but also different types of phrasing, different modes of inflection, and different cultural expectations. You can resolve this issue with the help of “universal” models that can transfer at least some learning to other languages. However, you’ll still need to spend time retraining your NLP system for each language.

It also helps to quickly find relevant information from databases containing millions of documents in seconds. An NLP-generated document accurately summarizes any original text that humans can’t automatically generate. Also, it can carry out repetitive tasks such as analyzing large chunks of data to improve human efficiency. Fan et al. [41] introduced a gradient-based neural architecture search algorithm that automatically finds architecture with better performance than a transformer, conventional NMT models. The GUI for conversational AI should give you the tools for deeper control over extract variables, and give you the ability to determine the flow of a conversation based on user input – which you can then customize to provide additional services.

Addressing these challenges requires not only technological innovation but also a multidisciplinary approach that considers linguistic, cultural, ethical, and practical aspects. As NLP continues to evolve, these considerations will play a critical role in shaping the future of how machines understand and interact with human language. Most higher-level NLP applications involve aspects that emulate intelligent behaviour and apparent comprehension of natural language.

The third objective of this paper is on datasets, approaches, evaluation metrics and involved challenges in NLP. Section 2 deals with the first objective mentioning the various important terminologies of NLP and NLG. Section 3 deals with the history of NLP, applications of NLP and a walkthrough of the recent developments. Datasets used in NLP and various approaches are presented in Section 4, and Section 5 is written on evaluation metrics and challenges involved in NLP.

As most of the world is online, the task of making data accessible and available to all is a challenge. There are a multitude of languages with different sentence structure and grammar. Machine Translation is generally translating phrases from one language to another with the help of a statistical engine like Google Translate. The challenge with machine translation technologies is not directly translating words but keeping the meaning of sentences intact along with grammar and tenses. In recent years, various methods have been proposed to automatically evaluate machine translation quality by comparing hypothesis translations with reference translations.
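One ingredient of such automatic evaluation is clipped n-gram precision: the share of n-grams in the hypothesis translation that also appear in the reference, with each n-gram counted at most as often as it occurs in the reference. The sketch below shows only this ingredient, assuming whitespace tokenization and a single reference; metrics such as BLEU combine several n-gram orders with a brevity penalty. The function names are hypothetical.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return the list of n-grams (as tuples) in a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(hypothesis, reference, n=1):
    """Clipped n-gram precision of a hypothesis against one reference."""
    hyp_counts = Counter(ngrams(hypothesis.lower().split(), n))
    ref_counts = Counter(ngrams(reference.lower().split(), n))
    total = sum(hyp_counts.values())
    if total == 0:
        return 0.0
    # Each hypothesis n-gram is credited at most as often as it
    # appears in the reference, so repeating a word does not help.
    overlap = sum(min(count, ref_counts[gram]) for gram, count in hyp_counts.items())
    return overlap / total


if __name__ == "__main__":
    print(clipped_precision("the the the cat", "the cat sat", n=1))
    print(clipped_precision("the the the cat", "the cat sat", n=2))
```

The clipping step is what stops a degenerate hypothesis that repeats a common word from scoring well.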

These are easy for humans to understand because we read the context of the sentence and we understand all of the different definitions. And, while NLP language models may have learned all of the definitions, differentiating between them in context can present problems. The earliest decision trees, producing systems of hard if–then rules, were still very similar to the old rule-based approaches.

Statistical and machine learning involve the development of algorithms that allow a program to infer patterns. During an iterative learning phase, the numerical parameters of the underlying model are optimized against a numerical measure. Machine-learning models can be predominantly categorized as either generative or discriminative.

Various researchers (Sha and Pereira, 2003; McDonald et al., 2005; Sun et al., 2008) [83, 122, 130] used CoNLL test data for chunking and used features composed of words, POS tags, and tags. No language is perfect, and most languages have words that have multiple meanings. For example, a user who asks “how are you” has a totally different goal than a user who asks something like “how do I add a new credit card?” Good NLP tools should be able to differentiate between these phrases with the help of context. A human being must be immersed in a language constantly for a period of years to become fluent in it; even the best AI must also spend a significant amount of time reading, listening to, and utilizing a language.

The software would analyze social media posts about a business or product to determine whether people think positively or negatively about it. NLP can be used in chatbots and computer programs that use artificial intelligence to communicate with people through text or voice. The chatbot uses NLP to understand what the person is typing and respond appropriately.

These could include metrics like increased customer satisfaction, time saved in data processing, or improvements in content engagement. This is where training and regularly updating custom models can be helpful, although it often requires quite a lot of data. The same words and phrases can have different meanings according to the context of a sentence, and many words, especially in English, have the exact same pronunciation but totally different meanings. We can apply another pre-processing technique called stemming to reduce words to their “word stem”. For example, words like “assignee”, “assignment”, and “assigning” all share the same word stem: “assign”.
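The stemming idea can be sketched with a deliberately naive suffix stripper. The suffix list and the minimum stem length below are assumptions chosen so that the example words from the text collapse to “assign”; established stemmers use much richer rule sets and may produce different stems.

```python
# Hypothetical, illustrative suffix list; not taken from any real stemmer.
SUFFIXES = ("ment", "ing", "ee", "ed", "s")


def naive_stem(word, min_stem_length=3):
    """Strip the longest matching suffix, keeping at least min_stem_length letters."""
    word = word.lower()
    for suffix in sorted(SUFFIXES, key=len, reverse=True):
        if word.endswith(suffix) and len(word) - len(suffix) >= min_stem_length:
            return word[: -len(suffix)]
    return word


if __name__ == "__main__":
    for w in ("assignee", "assignment", "assigning", "assign"):
        print(w, "->", naive_stem(w))
```

Trying the longest suffix first avoids cutting only the final “s” from a word that ends in a longer suffix, and the minimum-length check keeps short words such as “bee” intact.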

IE systems should work at many levels, from word recognition to discourse analysis at the level of the complete document. One application of the Blank Slate Language Processor (BSLP) (Bondale et al., 1999) [16] approach is the analysis of a real-life natural language corpus that consists of responses to open-ended questionnaires in the field of advertising. The first step to overcome NLP challenges is to understand your data and its characteristics.

- Capital One claims that Eno is the first natural language SMS chatbot from a U.S. bank that allows customers to ask questions using natural language.

- It’s a process of extracting named entities from unstructured text into predefined categories.

” is interpreted as “asking for the current time” in semantic analysis, whereas in pragmatic analysis the same sentence may refer to “expressing resentment to someone who missed the due time”. Thus, semantic analysis is the study of the relationship between various linguistic utterances and their meanings, but pragmatic analysis is the study of context which influences our understanding of linguistic expressions. Pragmatic analysis helps users to uncover the intended meaning of the text by applying contextual background knowledge. The rationalist or symbolic approach assumes that a crucial part of the knowledge in the human mind is not derived by the senses but is fixed in advance, probably by genetic inheritance. It was believed that machines can be made to function like the human brain by giving them some fundamental knowledge and a reasoning mechanism; linguistic knowledge is directly encoded in rules or other forms of representation.

Its task was to implement a robust and multilingual system able to analyze and comprehend medical sentences, and to preserve the knowledge in free text in a language-independent knowledge representation [107, 108]. Another challenge is building NLP models that can maintain context throughout a conversation. Understanding context enables systems to interpret user intent, track conversation history, and generate relevant responses based on the ongoing dialogue. Apply an intent recognition algorithm to find the underlying goals and intentions expressed by users in their messages.

Peter Wallqvist, CSO at RAVN Systems commented, “GDPR compliance is of universal paramountcy as it will be exploited by any organization that controls and processes data concerning EU citizens. The Linguistic String Project-Medical Language Processor is one of the large-scale projects of NLP in the field of medicine [21, 53, 57, 71, 114]. The LSP-MLP helps physicians to extract and summarize information on any signs or symptoms, drug dosage and response data, with the aim of identifying possible side effects of any medicine while highlighting or flagging data items [114].

Linguistics is the science which involves the meaning of language, language context and various forms of the language. So, it is important to understand various important terminologies of NLP and different levels of NLP. We next discuss some of the commonly used terminologies in different levels of NLP.

The Robot uses AI techniques to automatically analyze documents and other types of data in any business system that is subject to GDPR rules. It allows users to quickly and easily search, retrieve, flag, classify, and report on data deemed to be highly sensitive under GDPR. Users can also identify personal data in documents, view feeds on the latest personal data that requires attention, and produce reports on the data suggested to be deleted or secured. RAVN’s GDPR Robot is also able to speed up requests for information (Data Subject Access Requests, “DSAR”) in a simple and efficient way, removing the need for a manual approach to these requests, which tends to be very labor-intensive.

It stores the history, structures the content that is potentially relevant and deploys a representation of what it knows. All of these form the situation, while selecting the subset of propositions that the speaker has. Mitigating innate biases in NLP algorithms is a crucial step toward ensuring fairness, equity, and inclusivity in natural language processing applications. Natural language processing is a powerful tool of artificial intelligence that enables computers to understand, interpret and generate meaningful, human-readable text. In natural language processing, the text is tokenized, meaning it is broken into tokens, which can be words, phrases or characters. The text is cleaned and preprocessed before natural language processing techniques are applied.
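The cleaning and tokenization steps described above can be sketched with the standard library alone. This is a minimal illustration that assumes English-like text, lowercasing as the only cleaning step, and a simple regular expression for word boundaries; the function names are hypothetical, and real pipelines usually rely on a dedicated tokenizer.

```python
import re

# Matches runs of letters or digits, optionally followed by an
# apostrophe part (so "don't" stays one token).
WORD_PATTERN = re.compile(r"[a-z0-9]+(?:'[a-z0-9]+)?")


def word_tokens(text):
    """Lowercase the text and split it into word tokens, dropping punctuation."""
    return WORD_PATTERN.findall(text.lower())


def char_tokens(text):
    """Split the text into single-character tokens."""
    return list(text)


if __name__ == "__main__":
    print(word_tokens("The text is cleaned, then tokenized!"))
    print(char_tokens("cat"))
```

Word-level and character-level tokens are two of the granularities mentioned in the text; phrase-level tokens would need an extra grouping step that is not shown here.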

Luong et al. [70] used neural machine translation on the WMT14 dataset and performed translation of English text to French text. The model demonstrated a significant improvement of up to 2.8 bilingual evaluation understudy (BLEU) points compared to various neural machine translation systems. Information overload is a real problem in this digital age, and already our reach and access to knowledge and information exceeds our capacity to understand it. This trend is not slowing down, so the ability to summarize data while keeping the meaning intact is in high demand. Event discovery in social media feeds (Benson et al., 2011) [13] uses a graphical model to analyze social media feeds and determine whether they contain the name of a person, venue, place, time, etc. Here the speaker just initiates the process and does not take part in the language generation.

Even though the second response is very limited, it’s still able to remember the previous input and understands that the customer is probably interested in purchasing a boat and provides relevant information on boat loans. Natural Language Processing plays an essential part in technology and the way humans interact with it. Though it has its limitations, it still offers huge and wide-ranging advantages to any business.

If you feed the system bad or questionable data, it’s going to learn the wrong things, or learn in an inefficient way. Though natural language processing tasks are closely intertwined, they can be subdivided into categories for convenience. Social media monitoring tools can use NLP techniques to extract mentions of a brand, product, or service from social media posts. Once detected, these mentions can be analyzed for sentiment, engagement, and other metrics. This information can then inform marketing strategies or evaluate their effectiveness.

This is because a single statement can be expressed in multiple ways without changing the intent and meaning of that statement. Evaluation metrics are important for assessing a model’s performance, especially if we are trying to solve two problems with one model. Spelling mistakes and typos are a natural part of interacting with a customer. Our conversational AI platform uses machine learning and spell correction to easily interpret misspelled messages from customers, even if their language is remarkably sub-par. The fifth step to overcome NLP challenges is to keep learning and updating your skills and knowledge.
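The text does not say how the platform's spell correction works internally, so the following is only a generic sketch of the idea: each word in a message is replaced by the closest entry in a known vocabulary when the similarity is high enough. It uses `difflib.get_close_matches` from the Python standard library; the vocabulary, the `cutoff` value, and the function names are assumptions made for the example.

```python
import difflib

# Hypothetical domain vocabulary for a banking assistant.
VOCABULARY = ["account", "balance", "credit", "card", "loan", "transfer"]


def correct_word(word, vocabulary=VOCABULARY, cutoff=0.75):
    """Return the closest vocabulary entry, or the word itself if none is close."""
    matches = difflib.get_close_matches(word.lower(), vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else word


def correct_message(message):
    """Apply word-level correction to a whitespace-separated message."""
    return " ".join(correct_word(w) for w in message.split())


if __name__ == "__main__":
    print(correct_message("acount balanse"))
```

A fairly high cutoff keeps ordinary words that are not in the vocabulary from being rewritten; a production system would more likely combine a larger dictionary with context from the surrounding words.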

Earlier machine learning techniques such as Naïve Bayes and HMMs were widely used for NLP, but by the end of 2010, neural networks had transformed and enhanced NLP tasks by learning multilevel features. A major use of neural networks in NLP is word embedding, where words are represented in the form of vectors. Initially, the focus was on feedforward [49] and CNN (convolutional neural network) architectures [69], but later researchers adopted recurrent neural networks to capture the context of a word with respect to the surrounding words of a sentence.

Their pipelines are built as a data-centric architecture so that modules can be adapted and replaced. Furthermore, modular architecture allows for different configurations and for dynamic distribution. Using these approaches is better, as the classifier is learned from training data rather than built by hand. Naïve Bayes is preferred because of its performance despite its simplicity (Lewis, 1998) [67]. In text categorization, two types of models have been used (McCallum and Nigam, 1998) [77]. In the first model, a document is generated by first choosing a subset of the vocabulary and then using the selected words any number of times, at least once, irrespective of order.

It helps to calculate the probability of each tag for the given text and return the tag with the highest probability. Bayes’ Theorem is used to predict the probability of a feature based on prior knowledge of conditions that might be related to that feature. Naïve Bayes classifiers are applied in usual NLP tasks such as segmentation and translation, but they are also explored in unusual areas like segmentation for infant learning and identifying documents for opinions and facts. Anggraeni et al. (2019) [61] used ML and AI to create a question-and-answer system for retrieving information about hearing loss. They developed I-Chat Bot, which understands the user input, provides an appropriate response, and produces a model that can be used to search for information about hearing impairments. The problem with naïve Bayes is that we may end up with zero probabilities when we meet words in the test data for a certain class that are not present in the training data.
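A common remedy for the zero-probability problem is add-one (Laplace) smoothing, which adds a small constant to every word count so that no word seen in training has probability zero for any class. The sketch below is a minimal multinomial naïve Bayes classifier written for illustration; the class name, the whitespace tokenization, and the choice to skip words never seen in training are all assumptions, not a description of any cited system.

```python
import math
from collections import Counter, defaultdict


class TinyNaiveBayes:
    """Illustrative multinomial naive Bayes with additive smoothing."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha                       # smoothing constant (1.0 = add-one)
        self.word_counts = defaultdict(Counter)  # label -> word -> count
        self.doc_counts = Counter()              # label -> number of documents
        self.vocab = set()

    def fit(self, docs, labels):
        for doc, label in zip(docs, labels):
            tokens = doc.lower().split()
            self.doc_counts[label] += 1
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def log_scores(self, doc):
        total_docs = sum(self.doc_counts.values())
        vocab_size = len(self.vocab)
        scores = {}
        for label, n_docs in self.doc_counts.items():
            counts = self.word_counts[label]
            total_words = sum(counts.values())
            score = math.log(n_docs / total_docs)  # log prior
            for token in doc.lower().split():
                if token not in self.vocab:
                    continue  # assumption: ignore words never seen in training
                # Smoothed likelihood: never zero, even if this class never saw the word.
                score += math.log((counts[token] + self.alpha)
                                  / (total_words + self.alpha * vocab_size))
            scores[label] = score
        return scores

    def predict(self, doc):
        scores = self.log_scores(doc)
        return max(scores, key=scores.get)


if __name__ == "__main__":
    model = TinyNaiveBayes().fit(
        ["great product love it", "terrible service hate it", "love the service"],
        ["pos", "neg", "pos"],
    )
    print(model.predict("hate terrible"), model.predict("love great"))
```

Working in log space avoids numeric underflow on longer texts, and with `alpha` greater than zero the logarithm is always defined, which is exactly what the unsmoothed model cannot guarantee.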

It enables robots to analyze and comprehend human language, enabling them to carry out repetitive activities without human intervention. Examples include machine translation, summarization, ticket classification, and spell check. Wiese et al. [150] introduced a deep learning approach based on domain adaptation techniques for handling biomedical question answering tasks.

Hidden Markov Models are extensively used for speech recognition, where the output sequence is matched to the sequence of individual phonemes. HMMs are not restricted to this application; they have several others, such as bioinformatics problems, for example multiple sequence alignment [128]. Sonnhammer mentioned that Pfam holds multiple alignments and hidden Markov model-based profiles (HMM-profiles) of entire protein domains. Domain boundaries, family members and alignments are determined semi-automatically, based on expert knowledge, sequence similarity, other protein family databases and the capability of HMM-profiles to correctly identify and align the members.

This article contains six examples of how boost.ai solves common natural language understanding (NLU) and natural language processing (NLP) challenges that can occur when customers interact with a company via a virtual agent. In this evolving landscape of artificial intelligence (AI), natural language processing (NLP) stands out as an advanced technology that bridges the gap between humans and machines. In this article, we will discover the major challenges of natural language processing (NLP) faced by organizations.…

About Leslie Parrish

About Leslie Parrish – Leslie Parrish is the pen name of multi-published, award-winning romance author Leslie Kelly.

The author of more than thirty light, sexy contemporary romance novels, she explores her second genre love, dark suspense, in her new Leslie Parrish books.

Giving in to her dark side has been a thrilling dream come true, and she hopes to keep walking the balance beam between sexy, playful fun and dark, dangerous thrills.

Leslie lives in Maryland with her husband Bruce and their three daughters.

Q: You also write as Leslie Kelly.

How did you go from writing super sexy, funny contemporary romance to such dark, gritty suspense?

A: I have always been an avid reader and have very varied tastes in books.

As much as I love funny romance authors like Susan Elizabeth Phillips and Jennifer Crusie, I am equally wild about Stephen King and Peter Straub.

Even something you love can eventually get a little tiring, so after writing more than thirty fun, sexy romance novels, I really wanted to challenge myself as a writer and explore the darker side of my imagination.

And the Leslie Parrish books were born.…

Pitch Black

Pitch Black – After a botched investigation left him wounded and disgraced, Special Agent Alec Lambert is forced to transfer to Wyatt Blackstone’s team.

The former profiler has lost his edge, buried under the guilt he feels over another agent’s death.

But he will need all of his skills when he realizes he is getting another crack at the case that haunts him.

A serial killer known as the Professor is now using the latest e-mail scams to lure his victims, and the Black CAT team is on his trail.

Samantha Dalton never intended to become an online vigilante, until her grandmother was swindled out of everything she had.

Devoting herself to exposing scams and preventing the same tragedy from striking other families, Sam has gained fame and success with her website and a newly released book.

A recluse since her ugly divorce, Sam really does not want the outside world intruding on her privacy.

Especially not when that outside world is a sexy FBI agent who tells her she has a cyber connection to a murdered teenage boy.

When the killer opens a line of communication with Sam through her website, Alec and his team ask for her help in stopping him.

There is one thing they don’t know, however.

The Professor does not just see Sam Dalton as an anonymous online adversary. He is, in fact, her number one fan.

He has been watching her, waiting for the right time to make his move. He just isn’t sure what that move will be.

REVIEWS:

“With excellent writing and character development on par with its deft plotting, PITCH BLACK is a sure winner.” – Romance Reviews Today

“Elegant plot weaving, full of action and psychological drama, makes this dynamic series mesmerizing. 4 1/2 stars” – Romantic Times

“Leslie Parrish is a romantic suspense genius. Pitch Black, Book #2 in her Black CAT series, is as taut and brilliantly written as the first…. Don’t miss this one!” – Reader To Reader Reviews…

Black at Heart

Black at Heart – Even as he devotes himself to catching the Internet’s most despicable criminals,

Wyatt Blackstone has become an expert at detaching himself from his work.

But after losing a vulnerable young agent he cared about deeply, Wyatt’s famous cold control begins to crack.

He finds himself haunted by her beautiful image, especially now that someone has begun picking off criminals, one brutal murder after another.

Because the clues point to a most unlikely suspect: the woman the world believes is gone forever.

As murder victims go, Dr. Todd Fuller, on the surface,

did not seem a likely candidate to be cut to ribbons in a nondescript motel in the middle of nowhere.

A respected dentist from Scranton, he had a wife, an expensive house, a nice car, a thriving practice, no criminal record.

A charmed life, really.

There was nothing charming about him now.

Wyatt surveyed the scene from the doorway,

wondering why he still had the capacity to be shocked by what human beings were capable of doing to one another.

After everything he had witnessed throughout his life, including some of his earliest memories, he should not be dismayed that such things were possible.

Yet he found himself having to close his eyes, taking a moment to prepare, before entering the room.

Because scenes like this were usually reserved for twisted movies that dish out terror to the masses.

Not the real world.…

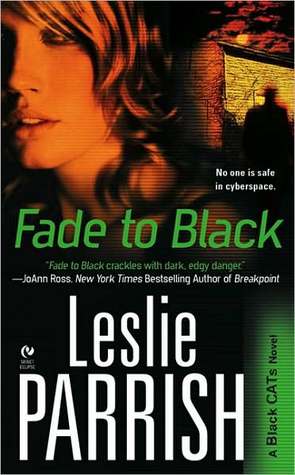

Fade To Black

Fade To Black – Dean Taggert, a former street cop turned FBI agent, has accepted a transfer to the new CAT for one reason: he needs to get the violence out of his life so that his ex will give him more time with his son.

Not easy to do when he is thrust into the darkest, most vicious investigation of his career.

A psychopath who calls himself the Reaper is auctioning off murders in a deviant cyber club called Satan’s Playground, and Dean and his team are forced to watch the killer’s brutal crimes online.

Stacey Rhodes is happy in her quiet, sleepy little town of Hope Valley, Virginia, where she took over as sheriff because of her father’s poor health.

Nothing much seems to happen here, apart from the mysterious disappearance of the town bad girl a year ago. So she is shocked when a sexy, brooding FBI agent walks into her world, bringing evidence that the missing local girl was the victim of a serial killer.

Even more shocking, the serial killer may be someone she knows.…

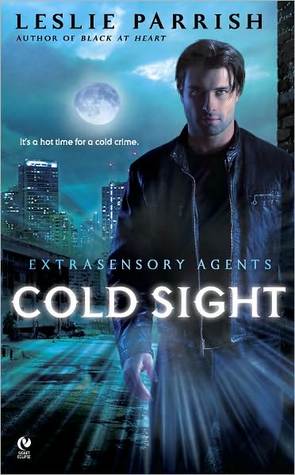

Extra Sensory Agents

Extra Sensory Agents – After being made the scapegoat in a botched investigation that led to the death of a child, Aidan McConnell became a recluse.

Still, as a favor to an old friend, Aidan will help out on the occasional XI case.

But behind his ruggedly handsome face, he keeps his emotions in check, for fear of getting burned again.

Reporter Lexie Nolan has a nose for news, and she believes a serial killer has been targeting teenage girls around Savannah.

But nobody believes her. So she turns for help to the new paranormal detective agency and the sexy, mysterious Aidan.

But as the two begin to bond, the case turns very personal for Lexie, and Aidan discovers that maybe he has not lost the ability to feel…

Book Fact and Fiction: The Strange Summer of 2020

Book Fact and Fiction: The Strange Summer of 2020 – This year, perhaps like never before, our reading habits reflect our precarious reality.

As the world has been thrown into chaos by a deadly pandemic and a racial reckoning under a cloud of exhaustion and fear, we have used books to escape the present, inform our beliefs and educate our homebound children.

We have found catharsis in apocalyptic science fiction and comfort in romance; advice in self-help guides and moments of peace, thanks to children’s activity books.

Most strikingly, since the death of George Floyd in May, we have flocked to books about race and social justice.

Data gathered from publishers, libraries, associations, data firms and readers of our website provide a snapshot of book trends during the spring and summer of 2020.

Together, these literary choices reflect our collective mood.

The Washington Post asked readers in early May and mid-August about the books that resonated with them. The following comes from more than 1,600 submissions.

Pada bulan Mei, lima penulis yang paling banyak dibaca adalah:

1. Erik Larson

“Selain menjadi sejarah Inggris yang sangat baik pada awal Perang Dunia Kedua, itu adalah deskripsi yang sangat baik tentang kepemimpinan yang hebat selama masa-masa yang sangat sulit,” tulis Steve Pascale tentang The Splendid and the Vile.

2. Hilary Mantel

Judith Chopra on The Mirror & the Light: “It allowed me to go somewhere else (I told my family not to disturb me in the 16th century) because it was utterly immersive.”

“I had to ration myself so I wouldn't finish it too quickly.”

3. Emily St. John Mandel

“Station Eleven was my favorite book of 2014, and returning to it during the pandemic was strangely comforting,” wrote Melissa Stevenson.

“The novel pulses with a beating humanist heart, and asks us to consider not only staying alive, but also finding things and people to live for.”

4. Amor Towles

“It shows how a whole world can exist within four walls,” wrote Barbara Doran of A Gentleman in Moscow.

“Now, many of us are stuck in our homes; we can make them our world.”

5. Albert Camus

“What is so striking is how well [The Plague] describes what is happening to us now,” wrote Janice Dole.

“I don't understand how people in our society don't have more knowledge today than in the past … People still act on emotion and not reason.”

In August, the five most-read authors were:

1. Brit Bennett

“I feel it's important at times like these to read books about the Black experience in the United States,” wrote Diane Starke of The Vanishing Half.

2. Ibram X. Kendi

On Stamped From the Beginning, Tracy Spangler wrote: “As a white person, it made me angry and ashamed: that this is the reality, and that I wasn't taught much about it as a student growing up here.”

3. Hilary Mantel

Heather Feeney commented on Wolf Hall and Bring Up the Bodies: “Reading these for the first time (and intending to read The Mirror & the Light soon) has given me the chance to reflect on my personal values and my purpose as a government employee in a time of uncertainty, chaos and even death.”

4. Isabel Wilkerson

The Warmth of Other Suns is “a masterpiece that changed and deepened my thinking about racism in America,” wrote Linda Kusserow.

5. Jeanine Cummins

“American Dirt is a gripping thriller with a heartbreaking message,” wrote Shelly Wiltshire.

“I know it's controversial, but I found the insight into the migrant story meaningful and the ‘nowhere else to turn’ scenario highly relevant.”…

5 of the Best Books Written by Women

Women writers have written many of the most successful novels in history, although some of them did not at first receive the acclaim they deserved.

The first four novels by the French writer Colette all appeared under the pen name of her first husband, Willy.

Willy won fame and fortune from books such as Claudine at School, and only after his death did Colette go to court to confirm that she was the sole author and have his name removed from the covers.

Early in their careers, Charlotte, Anne and Emily Brontë all took male pseudonyms (Currer, Acton and Ellis Bell respectively) after the poet laureate Robert Southey told Charlotte that “literature cannot be the business of a woman's life”.

Look out, too, for the winner of the 2020 Women's Prize for Fiction.

The shortlist was announced in April and the winner will be announced in September, judged, as ever, on “accessibility, originality and excellence in writing by women”.

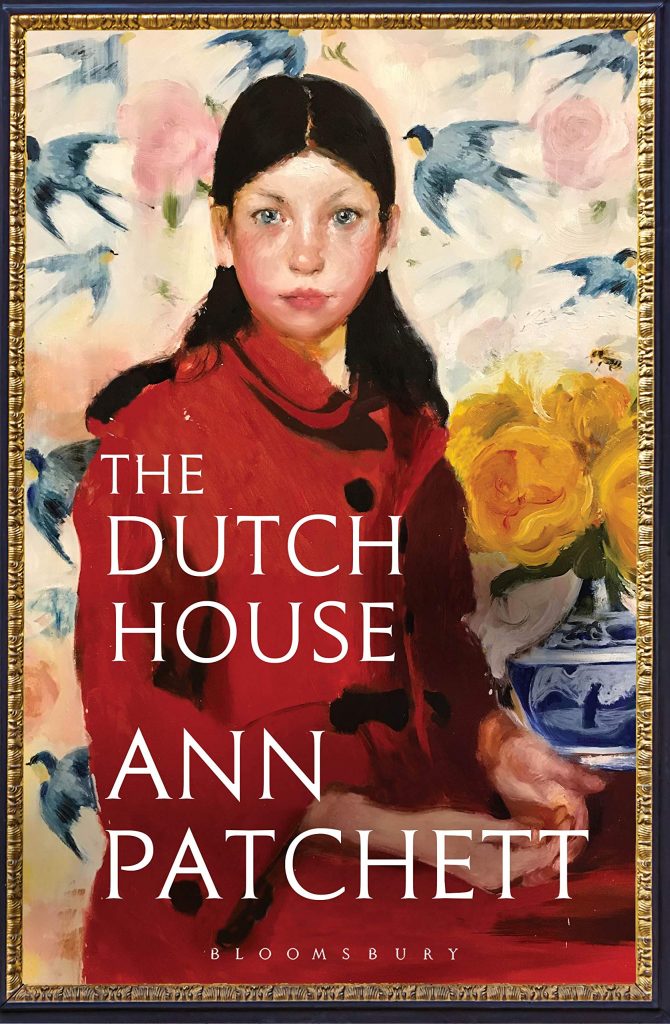

1. ‘The Dutch House’ by Ann Patchett, published by Bloomsbury

Ann Patchett's novel about two siblings, their unusual childhood home and a past they cannot let go of was longlisted for the 2020 Women's Prize for Fiction, and we are surprised it did not make the shortlist.

Danny Conroy and his older sister Maeve grow up in The Dutch House, a mansion on the outskirts of Philadelphia.

Their father, a self-made property magnate, is a distant figure and their mother has mysteriously walked out, but the siblings are devoted to each other.

But one day their father brings home the dreadful Andrea, along with her two daughters, and Danny and Maeve are forced to endure greater sorrow than ever before.

Patchett writes beautifully about family, love and loss, and the powerful ties that bind us all. This is a novel that stays in the mind long after you have finished reading.

2. ‘Remain Silent’ by Susie Steiner, published by The Borough Press

Fans have eagerly awaited the third installment in Susie Steiner's Manon Bradshaw series, and Remain Silent is certainly worth the wait.

Manon, a detective inspector with the Cambridgeshire police, is a larger-than-life character: often rude, often chaotic but brilliant at her job.

When the body of a young migrant is found hanging from a tree, there are no signs of a struggle and no indication that his death is anything other than a tragic suicide, except for a note pinned to his trousers, written in Lithuanian and saying “cannot speak”.

Steiner combines a gripping plot with astute social commentary, and this is every bit as good as her first two Manon Bradshaw novels.

3. ‘The Girl With The Louding Voice’ by Abi Daré, published by Sceptre

Abi Daré grew up in Nigeria and was inspired to write The Girl with the Louding Voice by her memories of the poor young housemaids who worked for middle-class families in Lagos.

Fourteen-year-old Adunni, her spirited heroine, has ambitions to become a teacher, but after the death of her beloved mother, her father forces her to marry a local taxi driver who already has two wives and four children.

When tragedy strikes she flees her husband and is sold as a domestic slave to a wealthy household in Lagos, only to suffer further unspeakable cruelty.

Adunni's humor and fierce determination to change her destiny shine through this remarkable debut novel.

4. ‘Normal People’ by Sally Rooney, published by Faber

Despite being published in 2018, receiving rave reviews at the time and selling more than a million copies, Sally Rooney's Normal People is one of the most talked-about novels of the year, thanks to the recent BBC adaptation of the same name.

If you enjoyed the TV dramatization, you should read the story of Marianne and Connell, star-crossed lovers who grow up in the same small town in the west of Ireland and try to stay apart but cannot.

At school Connell is popular with everyone while Marianne is a loner.

The situation changes at university in Dublin, where Marianne thrives but Connell finds it hard to fit in.

Rooney is still only 29, but she is a superb writer who deserves every accolade she receives.

5. ‘Girl, Woman, Other’ by Bernardine Evaristo, published by Penguin

Bernardine Evaristo won the Booker Prize last year for Girl, Woman, Other, sharing the award with Margaret Atwood's The Testaments.

Barack Obama named Evaristo's novel one of his favorite books of 2019, and it has been shortlisted for the 2020 Women's Prize for Fiction.

The novel follows the interconnected lives of 12 Black women as they navigate life in Britain over several decades.

Evaristo, professor of creative writing at Brunel University, describes the book as “a novel that explores class, gender, sexuality, family, work and race from multiple perspectives”. As well as being an insightful portrait of contemporary British womanhood, it is a terrific read too.…